To "factor out" the effects of tadpole mass. The only difference is in accounting forĮxamples: Suppose you perform an experiment in which tadpoles are raised atĭifferent temperatures, and you wish to study adult frog size. One covariable C, is a correlation between the residuals of the regression of A on C and B on C. The simplest case, a partial correlation between two variables, A and B, with Such variablesĪre called "covariables", and an analysis which factors out theirĮffects is called a "partial analysis". Sometimes you have one or more independent variables which are not of interest,īut you have to account for them when doing further analyses. Holm's method for correcting for multiple comparisons is less well-known,Īnd is also less conservative (see Legendre and Legendre, Observed p-value by the number of tests you perform. Test is very conservative, and the Scheffe test isįor the Bonferroni test, you simply multiply each There are ways to adjust for the problem of multiple comparison, the mostįamous being the Bonferroni test and the Scheffe test. If you had 20 independent tests, you would falsely reject at least one H 0ġ-(1-.05) 20 = 0.6415, or almost 2/3 of the time. Had two independent tests, you would falsely reject at least one H 0ġ-(1-.05) 2 = 0.0975, or almost 10% of the time. If H 0 isĪlways true, then you would reject it 5% of the time. The likelihood of falsely rejecting the null hypothesis. The more variables youĪdd, the more you erode your ability to test the model (e.g. The degrees of freedom in a multiple regression equals N-k-1, where k is the number of variables. Points, then you can fit the points exactly with a polynomial of degree N-1. The same holds true for polynomial regression. Packages provide "Adjusted R 2," which allows you toĬompare regressions with different numbers of variables. 
More variables you have, the higher the amount of variance you can explain.Įven if each variable doesn't explain much, adding a large number of variablesĬan result in very high values of R 2.

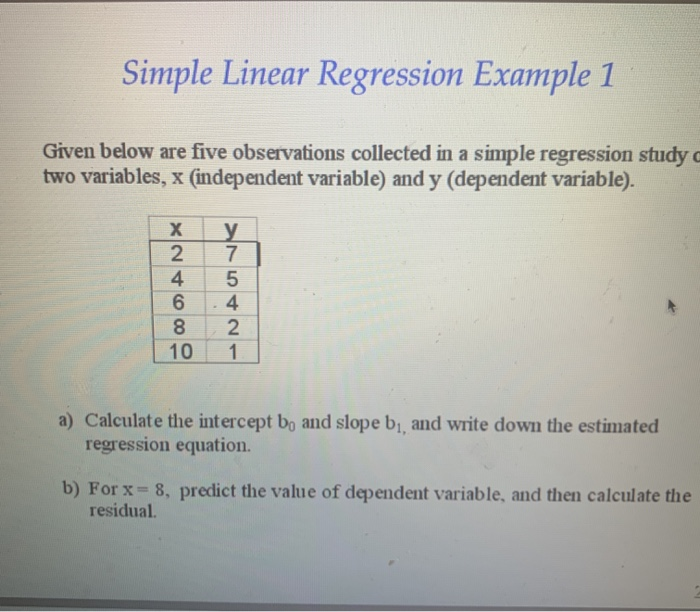

The following is an example SYSTAT output of a multiple regression: Dep Var: LOGSP N: 1412 Multiple R: 0.7565 Squared multiple R: 0.5723 Adjusted squared multiple R: 0.5717 Standard error of estimate: 0.2794 Effect Coefficient Std Error Std Coef Tolerance t P(2 Tail) CONSTANT 2.4442 0.0345 0.0. Independent variable which is quite redundant with other independent variables Variation explained by the other variables". Variable does not explain any of the variation in y, beyond the The null hypothesis is: "This independent The significance tests are conditional: This means given all the other Variable of interest and the residuals from the regression, if the variable Standardized coefficient is handy: it equals the value of r between the a standardized regression coefficient ( b if all."Adjusted multiple R 2" which will be discussedįor each variable, the following is usually provided: Of significance, and a "multiple R 2" - which isĪctually the value of r 2 for the measured y 's vs. However, the matrix solution is elegant:Īs with simple regression, the y-intercept disappears if all variablesĪlong with a multiple regression comes an overall test The estimation can still be done according the principles of linear least Line to data, we are now fitting a plane (for 2 independent variables), a space The model for a multiple regression takes the form:Īnd we wish to estimate the ß 0, ß 1, ß 2,Īre termed the "regression coefficients". Is still not considered a "multivariate" test because Problem with this is that you are putting some variables in privileged positions.Ī multiple regression allows the simultaneous testing and modeling of Variable, and then test whether a second independent variable is related to the One possible solution is to perform a regression with one independent The ageĮffect might override the diet effect, leading to a regression for diet which

ForĮxample, an animal's mass could be a function of both age and diet.

Possible that the independent variables could obscure each other's effects. We could perform regressions based on the following models:Īnd indeed, this is commonly done. Is related to more than one independent variable (e.g. However, we are often interested in testing whether a dependent variable (y) Of the squared (vertical) distances between the data points and theĬorresponding predicted values is minimized. "predicted values" or "y-hats", as estimated by the The green crosses are the actual data, and the red squares are the CCA is a special kind of multiple regression)īelow represents a simple, bivariate linear regression on a hypothetical data
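The partial correlation described on this page (the correlation between the residuals of A regressed on C and the residuals of B regressed on C) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses NumPy, the helper names `residuals` and `partial_corr` are hypothetical, and the data are simulated, not taken from any real experiment.

```python
import numpy as np

def residuals(y, x):
    """Residuals of a least-squares regression of y on x (with intercept)."""
    X = np.column_stack([np.ones_like(x), x])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return y - X @ coef

def partial_corr(a, b, c):
    """Correlation between the residuals of A on C and of B on C."""
    return np.corrcoef(residuals(a, c), residuals(b, c))[0, 1]

# Simulated (hypothetical) data: A and B are both driven by the covariable C,
# but have no direct relationship with each other.
rng = np.random.default_rng(0)
c = rng.normal(size=200)
a = 2.0 * c + rng.normal(size=200)
b = -1.5 * c + rng.normal(size=200)

raw_r = np.corrcoef(a, b)[0, 1]      # ordinary correlation of A and B
partial_r = partial_corr(a, b, c)    # correlation after factoring out C

print(f"raw r = {raw_r:.3f}, partial r = {partial_r:.3f}")
```

In this simulated case the raw correlation is strongly negative only because both variables depend on C; once C is factored out, the partial correlation should be close to zero. Note that the sketch returns only the coefficient; a significance test on it would also need to account for the covariable.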

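The multiple-comparison arithmetic on this page can be checked directly. The sketch below computes the chance of at least one false rejection across independent tests, applies the Bonferroni adjustment as described (multiply each observed p-value by the number of tests), and applies Holm's adjustment. The function names and p-values are hypothetical, capping adjusted values at 1 is an added convention, and the Holm code follows the usual step-down formulation and is not taken from this page.

```python
def familywise_error(alpha, n_tests):
    """Probability of at least one false rejection in n independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests

def bonferroni(p_values):
    """Multiply each p-value by the number of tests (capped at 1)."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]

def holm(p_values):
    """Holm step-down adjustment: the smallest p-value is multiplied by m,
    the next by m-1, and so on, keeping the adjusted values non-decreasing."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        value = min(1.0, (m - rank) * p_values[i])
        running_max = max(running_max, value)
        adjusted[i] = running_max
    return adjusted

print(f"{familywise_error(0.05, 2):.4f}")   # 0.0975
print(f"{familywise_error(0.05, 20):.4f}")  # 0.6415

p = [0.01, 0.04, 0.03, 0.20]  # hypothetical observed p-values
print(bonferroni(p))
print(holm(p))
```

Holm's adjusted p-values are never larger than the Bonferroni ones, which is the sense in which the method is less conservative.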